Audiobus: Use your music apps together.

What is Audiobus? — Audiobus is an award-winning music app for iPhone and iPad which lets you use your other music apps together. Chain effects on your favourite synth, run the output of apps or Audio Units into an app like GarageBand or Loopy, or select a different audio interface output for each app. Route MIDI between apps — drive a synth from a MIDI sequencer, or add an arpeggiator to your MIDI keyboard — or sync with your external MIDI gear. And control your entire setup from a MIDI controller.

Audiobus is the app that makes the rest of your setup better.

Comments

I don’t need to record automation, I just need to perform it. But recording would be good I guess.

I thought I heard Stagelight had some MIDI out issues. And it doesn't have tempo changes. But it was mostly the first that I didn't think to mention. Hopefully you get the other answers on it, I haven't looked since beta except to check re those two things.

I’m pretty settled on modstep.

You’ve all been incredible!! What a community, thank you.

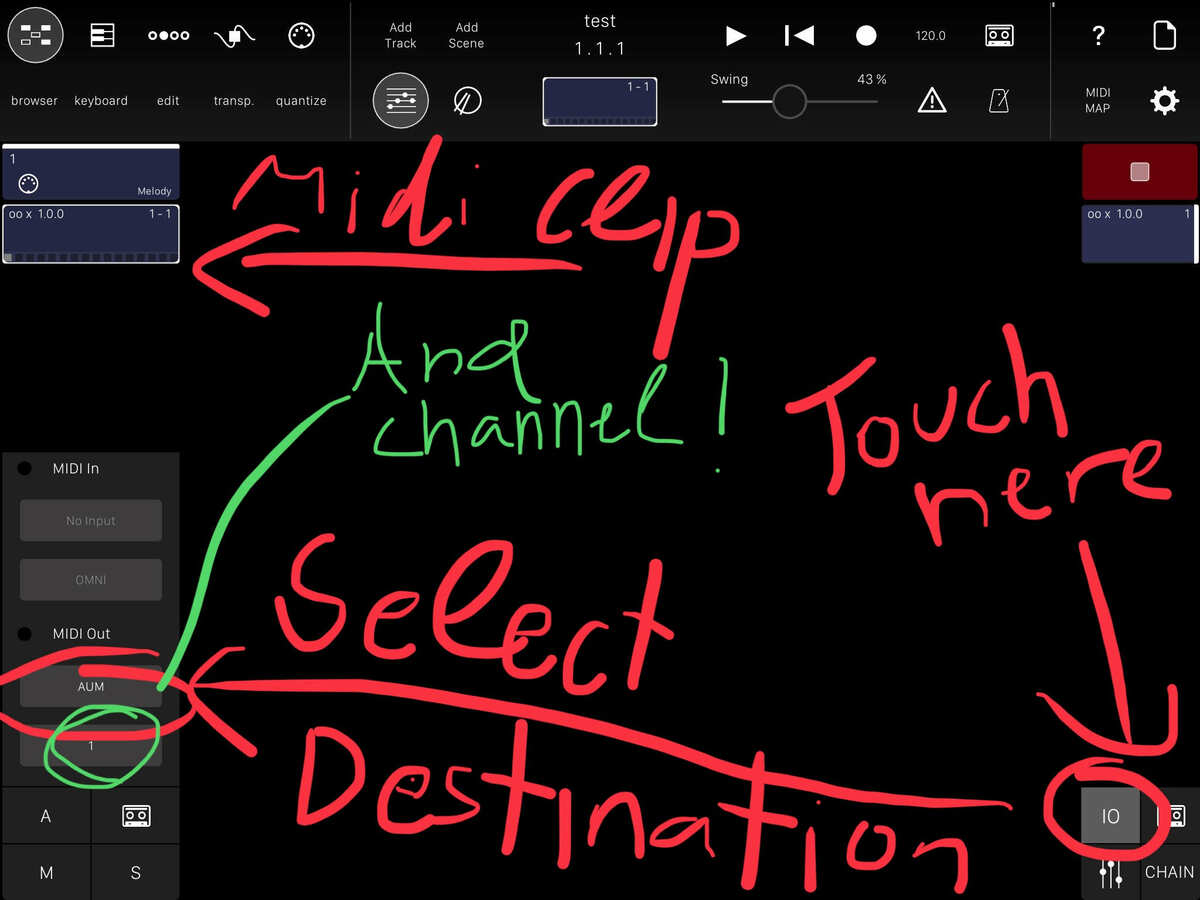

First launch AUM and add ModStep as audio. For now it’s used only for easy switching from AUM to ModStep. Now add an AU synth and select AUM as its MIDI in, on MIDI channel 1.

Now, switching to ModStep, we create a clip. We can add more clips vertically, and all of them will output to the selected MIDI port and channel. In this case it’s AUM on MIDI channel 1.

If you want to add more synths in AUM, you must select AUM as the MIDI source for each one and set a dedicated MIDI channel (up to 16 channels).

In ModStep you can add a track and select AUM as the destination port, on the channel you’ve set in AUM.
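For anyone who thinks better in code, here is a rough sketch of why one port can feed up to 16 synths: the channel is carried in the low four bits of each MIDI status byte, so the receiver can sort messages per channel. This is plain MIDI, not how AUM or ModStep are actually implemented, and the `synths_by_channel` table and its entries are made up for illustration.

```python
# Hypothetical channel-to-synth table, standing in for the per-synth
# "MIDI source + channel" setting described above.
synths_by_channel = {1: "Zeeon", 2: "second AU synth"}


def note_on(channel, note, velocity=100):
    """Build a raw 3-byte MIDI Note On for a 1-based channel (1..16)."""
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channels run from 1 to 16")
    if not (0 <= note <= 127 and 0 <= velocity <= 127):
        raise ValueError("note and velocity must be in 0..127")
    # 0x90 is Note On; the low nibble holds the zero-based channel.
    return bytes([0x90 | (channel - 1), note, velocity])


def channel_of(message):
    """Return the 1-based channel of a channel voice message."""
    return (message[0] & 0x0F) + 1


def route(message):
    """Pick the synth listening on the message's channel, or None."""
    return synths_by_channel.get(channel_of(message))


print(route(note_on(1, 60)))  # the synth on channel 1
print(route(note_on(5, 60)))  # nothing is listening on channel 5
```

So a ModStep track sending on channel 2 only reaches the synth set to channel 2, even though every track goes to the same AUM port.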

Hope it makes sense. But I would try simply adding the AU’

Great. I'm pretty well versed in Modstep if you have other questions feel free to tag me.

This is amazing thank you. And remind me, why do you do this?

Because of :

Plus, I don’t think ModStep can expose AU parameters.

It’s a known iOS specific bug, the devs are working on it (hopefully with midi routing and automation stuff)

Can’t make a video, maybe some screenshots, but is there something specific you want to ask?

Hmmm, just a general curiosity about the ‘gotchas’ which always are unexpected and left field...

Well, now that I think about it, since you can’t record AU automation from the UI, do you need to scroll through a list of AU parameters and pick the one you need to draw? I would assume so. Some synths and FX have tons of them.

+1 for genome

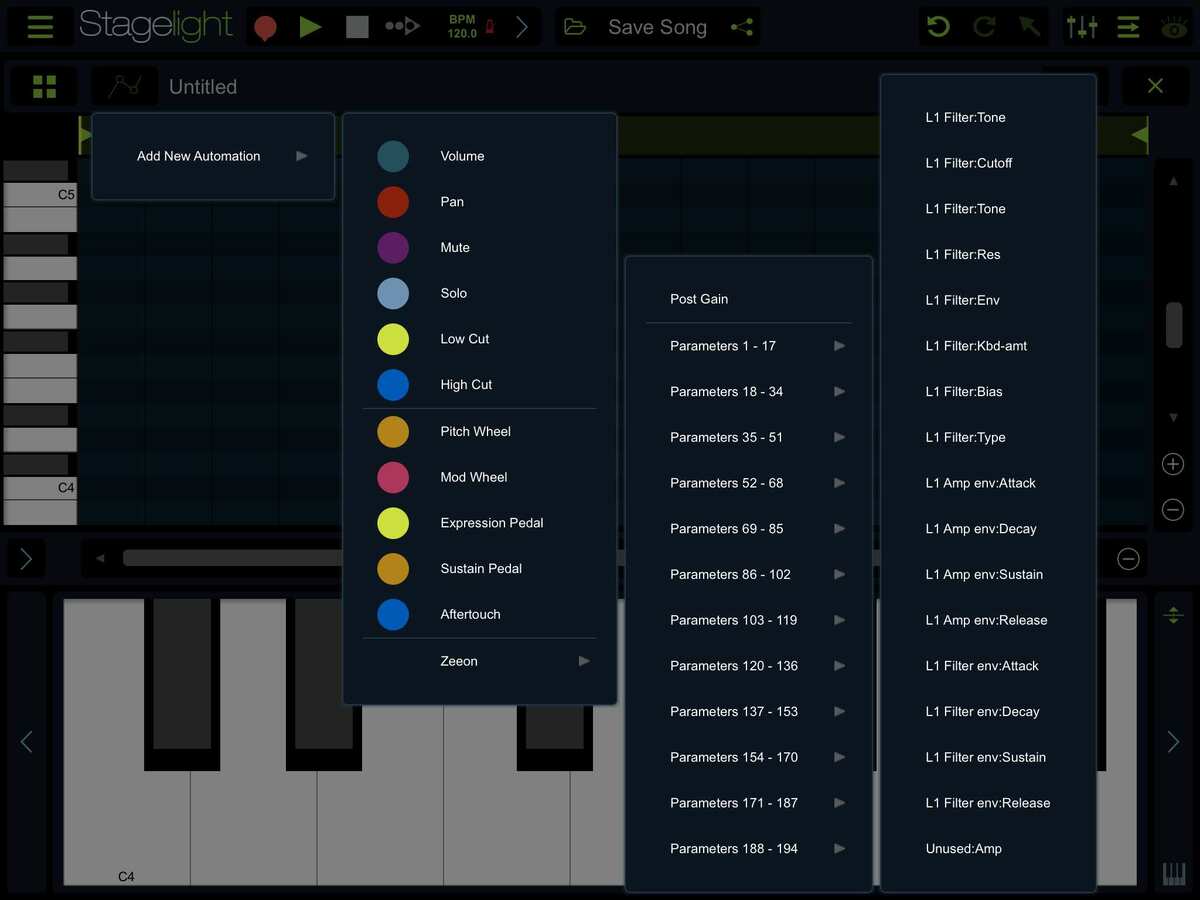

First picture shows how Stagelight lists the parameter. Nice and clean

Second picture shows a filter automation for Zeeon’s OSC1. I used the brush for the first half and the line tool for the second. Also I selected some points which can be deleted.

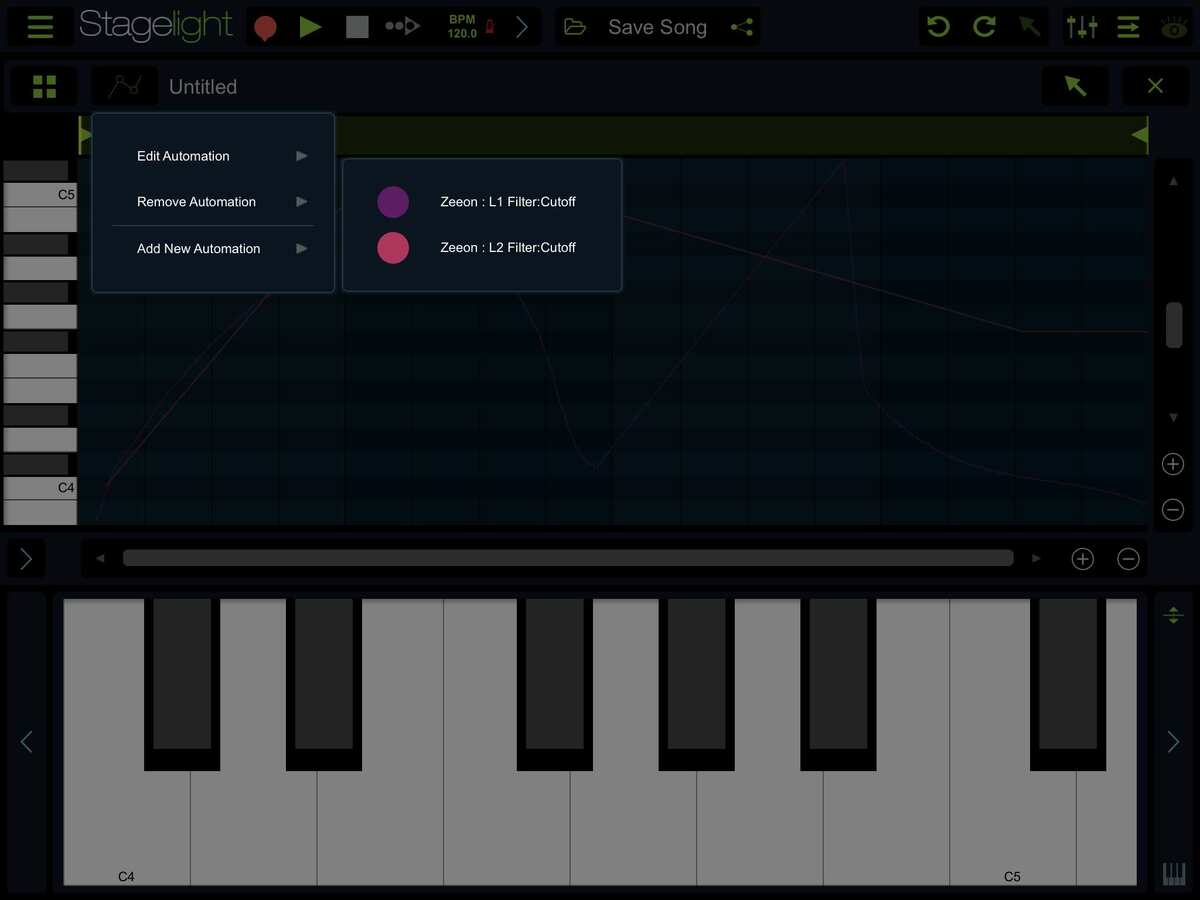

Third picture: I added an OSC2 automation by double tapping & making linear points.

Finally you can select to edit an automation or remove it

Although I couldn’t find a way to copy/paste or duplicate automation...

Hopefully there will be a “0 to 100” video from the Stagelight YouTube channel explaining automation soon!

Thanks for the shots! The param list layout and the fact that it does have line and brush do seem quite nice.

I recommend Modstep too, with either AUM/apeMatrix or Audiobus3 to host the AUs.

We'll get an AU midi clip launcher at some point.

Another one worth a look is LK: Ableton Live controller. You can actually sequence apps on the iPad instead, like with Modstep. I've only had a quick play and think Modstep still has the advantage, but the devs recently added support for MIDI out on the device itself, not just to Live.

STAGELIGHT .

Genome looks great, can’t believe I missed it. Will look at the videos. Could any user here share more info? Any bugs, missing stuff?

Before Gadget, I used it a lot. Been in touch with David Wallin a few years ago to make v1.1.3 run solid both as a clock master and slave with many different hardware sequencers and groove boxes "speaking different MIDI".

It's one of the few clip/pattern based MIDI sequencers that also have a straightforward song mode and allow for spontaneous MIDI clip launching.

A unique feature till today is the way you can enter notes and chords.

In the pattern editor (piano roll), you can open the keyboard, play all keys of the chord so the keys are shown in red, then tap on the notes grid to place the chord(s). Never been faster to place the same chord at arbitrary positions. The same works with an external MIDI keyboard: Even an un-trained musician can search for the right notes one-by-one and when found, hit the right position on the piano roll.

Some controls cannot be mapped to midi commands (like transport and recording).

I solved this by attaching a Bluetooth keyboard and, using the iOS accessibility feature Switch Control, letting different buttons "tap" transport controls.

Thanks

Transport would be cool, but for recording a clip I really need a dedicated button.

When recording a clip, do you have to pre-set the length like in ModStep, or does it grow automatically like in Stagelight?

Also, can MIDI CCs be recorded in real time?

This I would like to see. Were you shown the way or did you dig your own well?

So why has Genome (mostly) died off? Simply unfashionable/old-looking? Sounds as though it was never fully surpassed in some areas...

One word: Family 👨👩👧👦

A bit left field maybe, but how about KRFT?

I don't know if it's possible to do exactly what you want, but it looks like you'll be able to build a screen full of loop triggers, controllers, etc and have access to everything you want on one screen.

Just a thought...

You have to pre-set the length. Genome is strictly pattern-based and recording actually happens in the piano roll page. Of course you could always start with a super-long pattern (up to 128 bars per clip) and re-adjust the length afterwards. This is non-destructive: if you shorten a pattern that still has notes beyond the new end, they will be remembered, just not played.
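To picture what "non-destructive" means here, a tiny sketch of my mental model. It is not Genome's actual code; the `Pattern` class and its fields are invented for illustration, and only the 128-bar maximum comes from the app.

```python
from dataclasses import dataclass, field

MAX_BARS = 128  # longest pattern length mentioned above


@dataclass
class Pattern:
    """Hypothetical pattern: notes are (start_bar, pitch) pairs."""
    length_bars: int = MAX_BARS
    notes: list = field(default_factory=list)

    def playable(self):
        # Notes past the current length are kept in storage, just skipped.
        return [n for n in self.notes if n[0] < self.length_bars]


p = Pattern()
p.notes = [(0, 60), (5, 64), (100, 67)]  # record into a long pattern
p.length_bars = 4                        # trim it afterwards
print(p.playable())                      # only the note in bars 0..3
p.length_bars = 8                        # lengthen again
print(p.playable())                      # the note at bar 5 is back
```

The point is that changing the length only changes what gets played, never what is stored.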

Yes, like MIDI notes, CC are recorded in realtime, at the resolution defined by the currently set grid (1/2 to 1/128 resolution). They can also be edited afterwards, again with the current resolution on the timeline.
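Roughly what recording "at the grid resolution" could look like, as a sketch. I'm assuming 4/4 time, that 1/N means a fraction of a whole note, and that the last value landing on a grid step wins; Genome may well handle these details differently, and the function names are my own.

```python
import math


def grid_step_beats(division):
    """Length of one grid step in quarter-note beats, e.g. 16 -> 0.25."""
    if not 2 <= division <= 128:
        raise ValueError("grid runs from 1/2 to 1/128")
    return 4.0 / division  # assumes a whole note is 4 beats


def quantize_cc(events, division):
    """Snap (time_in_beats, value) CC events to the nearest grid step.

    If several events land on the same step, the last one wins
    (an assumption, not confirmed Genome behaviour).
    """
    step = grid_step_beats(division)
    snapped = {}
    for time, value in events:
        index = math.floor(time / step + 0.5)  # nearest step, halves round up
        snapped[index] = value
    return [(index * step, value) for index, value in sorted(snapped.items())]


wiggle = [(0.02, 10), (0.10, 20), (0.27, 30), (0.49, 40)]
print(quantize_cc(wiggle, 16))  # [(0.0, 20), (0.25, 30), (0.5, 40)]
```

With a coarse grid like 1/2 the same knob movement collapses to very few points, so set a fine grid before recording smooth sweeps.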

For controlling with hardware, I'm using a cheapo Chinese Bluetooth game controller with one button DIY-wired to a foot switch. Its buttons are recognized by iOS in Accessibility Switch Control.

You could map it to the REC button which is a toggle: Press to record, press again to end recording and continue playing.

@rs2000

I’m glad to see another Genome fan. Also glad of the time you put in with Mr. Wallin on midi clock ins and outs. It’s smooth as butter using MTS as host for instruments and clock for start-stop.

Genome for me is one of those apps that hasn’t received many updates (version 2.03, tweaking Ableton Link 2 years ago) because it doesn’t need them.

Well, I watched all the Genome videos I could find. It seems a great app, but I really don't like the GUI (SampleWiz era).

Thanks 😃

I've seen Genome being used in various modular synth setups, you can get an old iPad 1 and an Alesis IO Dock for around $100 already and v1.1.3 runs on iOS 5.1.1, that's not much to complain about

Yep, I have the older version on my iPad 1 from 2001, and use it with my Scope Creamware setup. 2.0 is fun too, and the dude's synths are cool. A shoutout here to his bleep!BOX.

Noah here 🤝

Greg.

Funny, I never thought I'd hear about Creamware here. I still use my Sonic Core Xite a lot in my studio.

Though I use RME and Apogee with my iPad the Sonic Core (Creamware) is still my favorite sound card by far!

A few years ago Sonic Core talked about iPad compatibility.

For now only Windows works with those cards.

I’m running on an old tower with Windows XP (that’s the last Windows OS it runs on, I think). It runs on old Mac towers too, I’ve heard: the noisy ones.

Cool thing on Windows, I can run scope projects into MTS, and move projects between that old tower and iPad