
Nanostudio 2.0.1 update available.

Audiobus support, virtual MIDI in, tons of AU-related fixes, mod wheel and sustain pedal support, save selection in the audio editor, playable keyboard in the pattern editor, velocity-sensitive pads in Slate, native iPad Pro support... lots of stuff.

Full release info:

https://www.blipinteractive.co.uk/community/index.php?p=/discussion/605/nanostudio-2-0-1-has-just-been-released#latest

Comments

Exciting! I can’t quite tell from the notes... maybe I read them too fast... but are we able to record midi plugins with this version?

Woot!

"Fixed MIDI input detection problem for some MIDI FX AUs (eg. Step Poly Arp)".

Looks to be... fingers crossed.

Ah interesting. I didn’t think it was a detection problem so much as it was just never added.

Not directly, but there is a workaround. Put the MIDI Tools Route plugin on the track you want to record (after the MIDI plugin whose output you want to record), create a second track in the sequencer, switch to that track (by default NS records MIDI on the currently active selected track) and hit play/record. You then get all the MIDI output from the plugins on the first track recorded.

Btw, one thing not mentioned in the list, but a direct result of AB support: you can load NS as an IAA generator in AUM or ApeMatrix.

Got it! Sounds like a good workaround until we get the real thing.

Great

Looking forward to the ipad pro optimisation too.

Woah

Has the update shown up for anyone else?

It needs some time, Apple is sluggish.

Close on audio tracks yet?

It’s out now

Is this the final solution? Is no proper support for recording from MIDI plugins planned?

The roadmap around release mentioned several things (IR reverb, audio tracks, iPhone support). It's obviously quite behind... can you tell us more or less when these can realistically be expected? This year, next year? What are the development priorities? I know sharing this info never ends well... but Blip was always open about it, so maybe you can share some...

Thanks in advance!

It's up in uk store...

It’s up in Dallas

My assessment also.

Sweet!

@recccp, I’m sure Dendy will give a more detailed answer, but in short, from other posts of his... Yes, recording of midi AU FX without a workaround is planned, but I think after the iPhone version and Audio Tracks. Both of which are somewhat behind schedule but (In Dendy’s estimation) likely to still make it some time this year.

iPad Pro screen optimizations look outstanding on my 13”. I have waaaay more screen real estate than before.

You forgot the most obvious and required thing, @deny

NanoStudio 2 by Blip Interactive Ltd

https://itunes.apple.com/us/app/nanostudio-2/id1112601015?l=en&mt=8

In your case, I did not expect this

And to have this locally too:

There are many more improvements, fixes and tweaks in this version. Please visit the Blip Interactive forums or refer to the in-app user manual for the full list.

So much nicer to use with the correct ui scaling now

@dendy Do you know if anyone has requested 'undo' for patch changes in Obsidian's Perform view?

It's easy to 'lose a patch' that hasn't been saved when using the arrows close to the patch name in Perform view, because the edits are lost when the patch changes.

That's good news, thank you!

So if I put NS2 in the audio out slot of AB3 and send audio in, does it actually receive any signal, given that NS2 doesn't currently have audio tracks? Is this pipeline for MIDI only?

Fantastic update! Can't wait for iPhone compatibility.

Sampling?

You can sample into the Obsidian sampler or into a Slate pad from Audiobus. Just open the recording screen and "audiobus" will be set as the recording input. If you have "monitoring" enabled, you hear what is coming into NS from AB in this screen.

For example, you can sample IAA synths this way: you send notes from NS into the IAA synth using NanoStudio's "External MIDI track" instrument, and sample the output of the IAA synth, loaded into the AB input slot, back into NS.

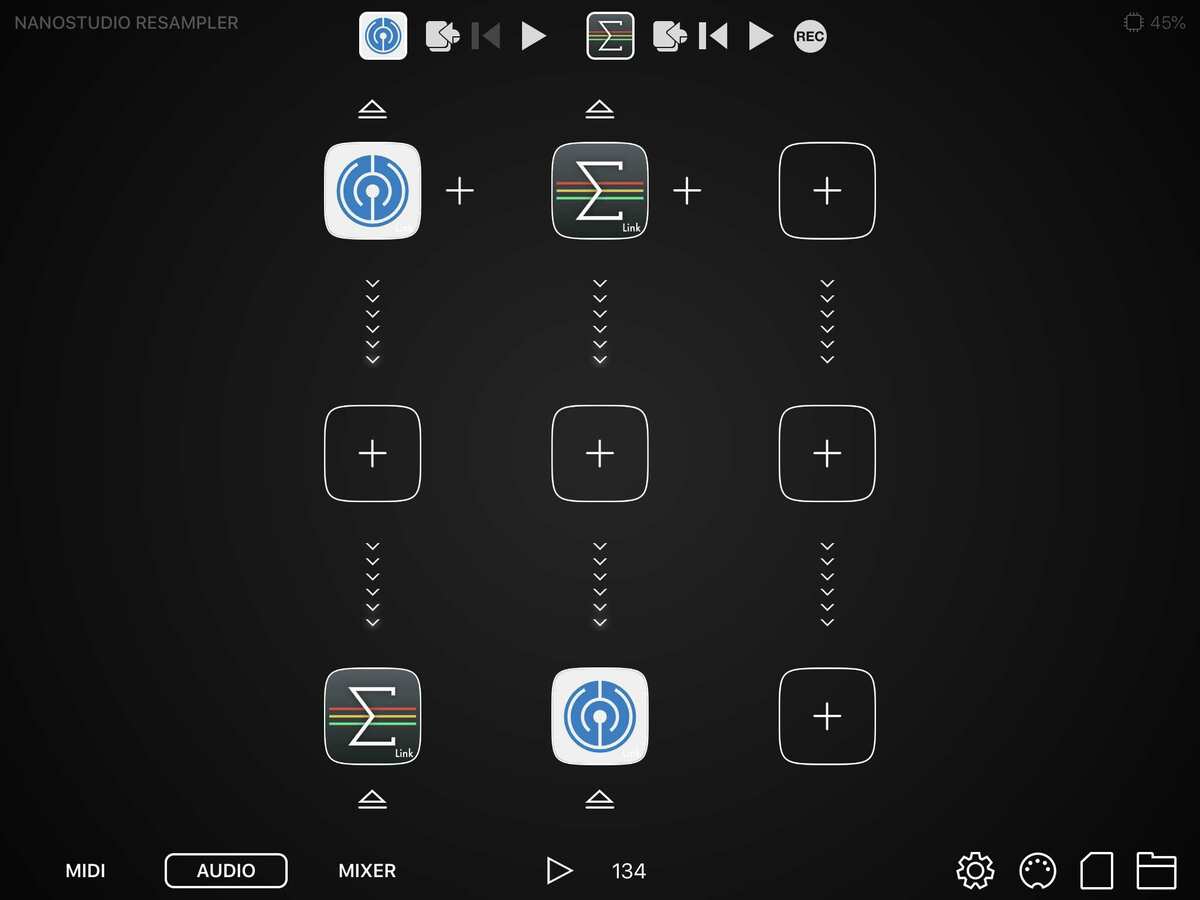

My favourite use case of AB is realtime resampling of NS output... I have saved an AB preset which routes NS -> AUM -> NS (AUM is there because you cannot route directly NS -> NS in AB, plus I also want to hear what is playing). In AUM I use a bus send for monitoring... In NS I can then at any moment sample into Obsidian / Slate whatever NS is currently playing, so realtime resampling (I think @Samu wanted this possibility?)


AB setup (saved as AB preset)

AUM setup

NS recording screen - ready to sample what NS is currently playing

(In this case, don't forget to disable "monitor" in the NS recording screen, otherwise you get feedback. In this configuration, monitoring is handled by AUM.)

You can monitor/sample from an Audiobus input source by opening up a Slate track, choosing a pad and tapping the 'sample' icon -> REC, which gets you to the recording window where you enable monitoring. In an Obsidian track, select OSC Type -> Sample -> Rec Sample.

Btw, my absolute favourite feature in this update is something that probably not many people will even notice, but for me it's a huge workflow speed-up. It's this:

Save selection - it lets you save the selected area and then jumps back to the editor... With the quantisation grid turned ON, it is really cool for splitting a longer recording into smaller pieces of the same size.