VisualSwift Public Beta

https://testflight.apple.com/join/9KePAn4p

I hope to gather in this thread discussions related to VisualSwift

Would be great to know what works, what doesn't, what's missing etc

The plan is the following:

1 - Host AUv3 plugins and connect them together.

2 - Create synthesizers and effects from low level components.

3 - Create Audio Visualisations using low level components.

I've struggled with stability in the past but I think it has improved a lot in the last couple of months.

Stability is a big priority before moving on to the low level components.

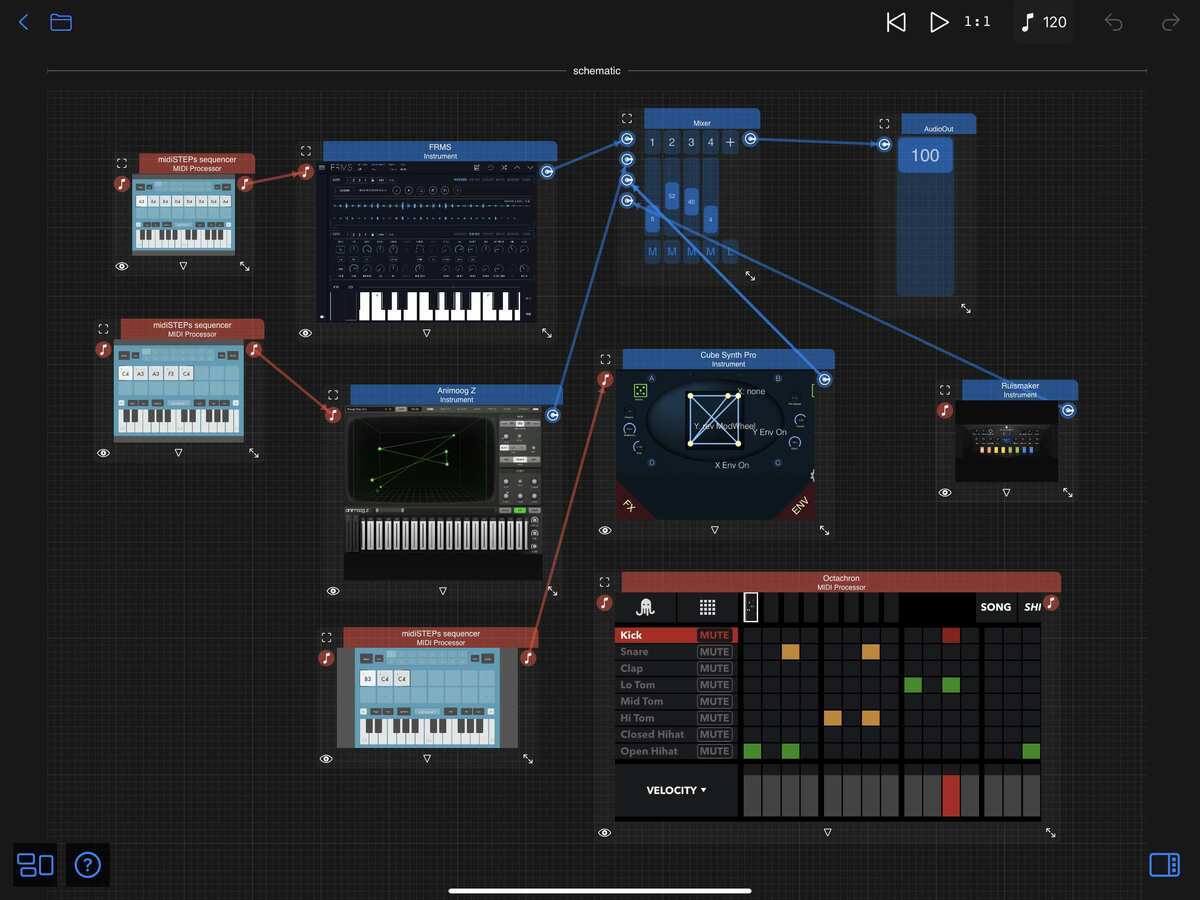

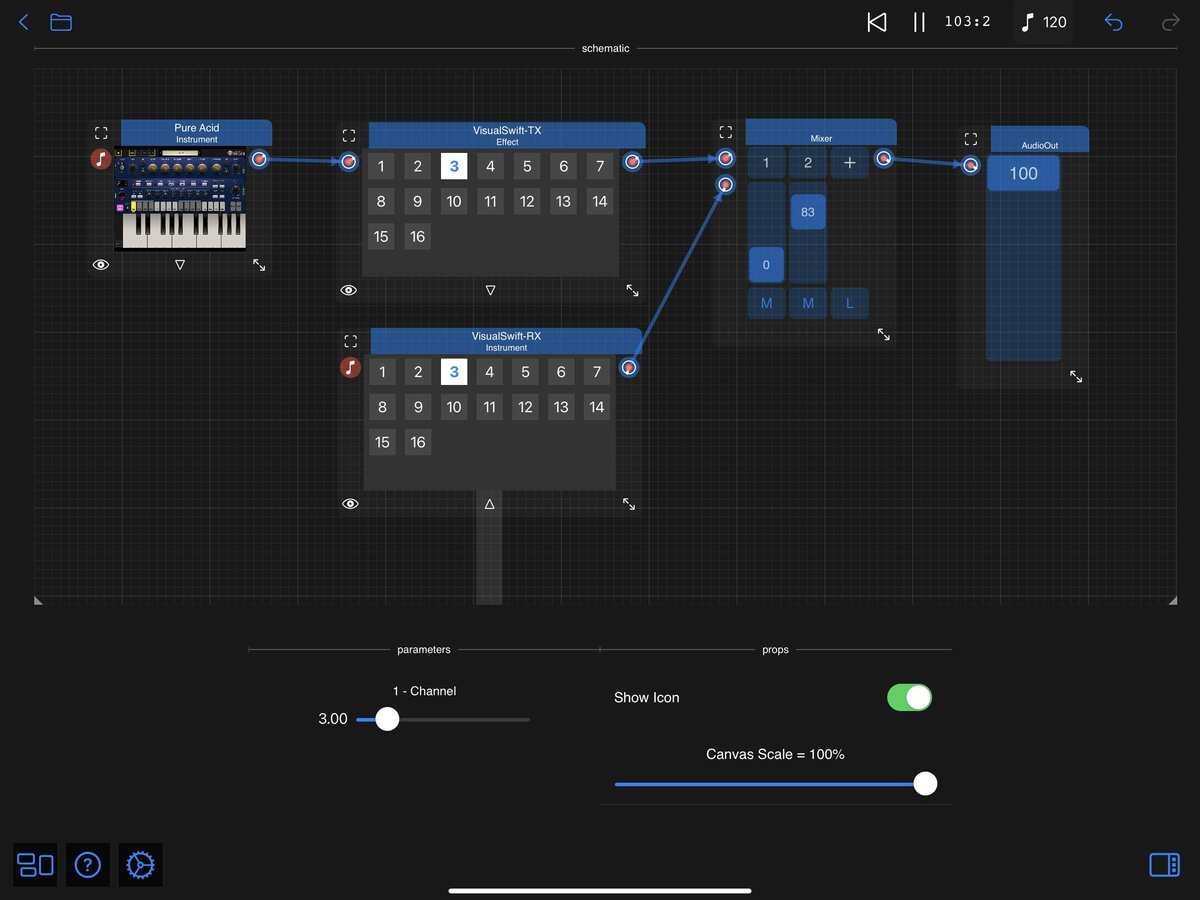

Here's an example screenshot:

Comments

An issue for me right now is that some MIDI sequencers (notably the Rozeta suite) present as AUv3 Instruments rather than MIDI processors, and VS doesn't seem to have the ability to route MIDI output from AUv3 instruments. There are other places this would be useful as well (Animoog Z and Drum Computer are both instruments I'd like to be able to capture MIDI out from).

Thanks. I've just uploaded the latest TestFlight v1.2.7 build 48. Bram Bos's Rozeta Suite instruments should now behave as if they were MIDI Processors.

I think the Rozeta Suite plugins are a bit of an exception: they had to be implemented as Instruments because there were no MIDI Processors at the time. I'm basically removing the Audio Output (as far as I understand, it's not needed) and exposing the MIDI Output.

I haven't fixed the issue for Animoog Z and Drum Computer yet. I think they're probably examples of a more general case that requires both Audio and MIDI outputs.

It has come a long way with your recent improvements, probably the most frequently updated app on my iPad the last month or two!

Something that I keep hitting when I use it is the feeling that I’d like to be able to manually resize the schematic. I know I can drag a component so that the schematic resizes but it would be useful to be able to manually resize in the same way as you can with the plugins. It can feel quite claustrophobic when you start adding plugins to the schematic.

Kind of related to this is that I keep getting to the point where I accidentally drag a plugin so its title bar is off the top of the schematic. I can’t see how to grab the window to move it once it is in this state.

Yes, I believe I heard as much from @brambos on these forums. That’s great!

Yes, for sure, there are many such AUs.

Anyway, thanks! I’ll keep following VS’s development with interest. I’m kind of curious to know what inspired you to build VS. It’s an interesting and cool concept, but I’m curious about your target use cases and/or what problems you’re setting out to solve.

Cheers!

Hi! @Jorge : this is a really interesting app. Question: can one use multiple TX components to connect to multiple channels in, say, AUM?

Crazy timing as I was just thinking about this app and going back over old threads here for it. Excited to try out the newest iteration!

I've just uploaded the latest v1.2.7 build 49. Instruments now have a MIDI output connector when supported.

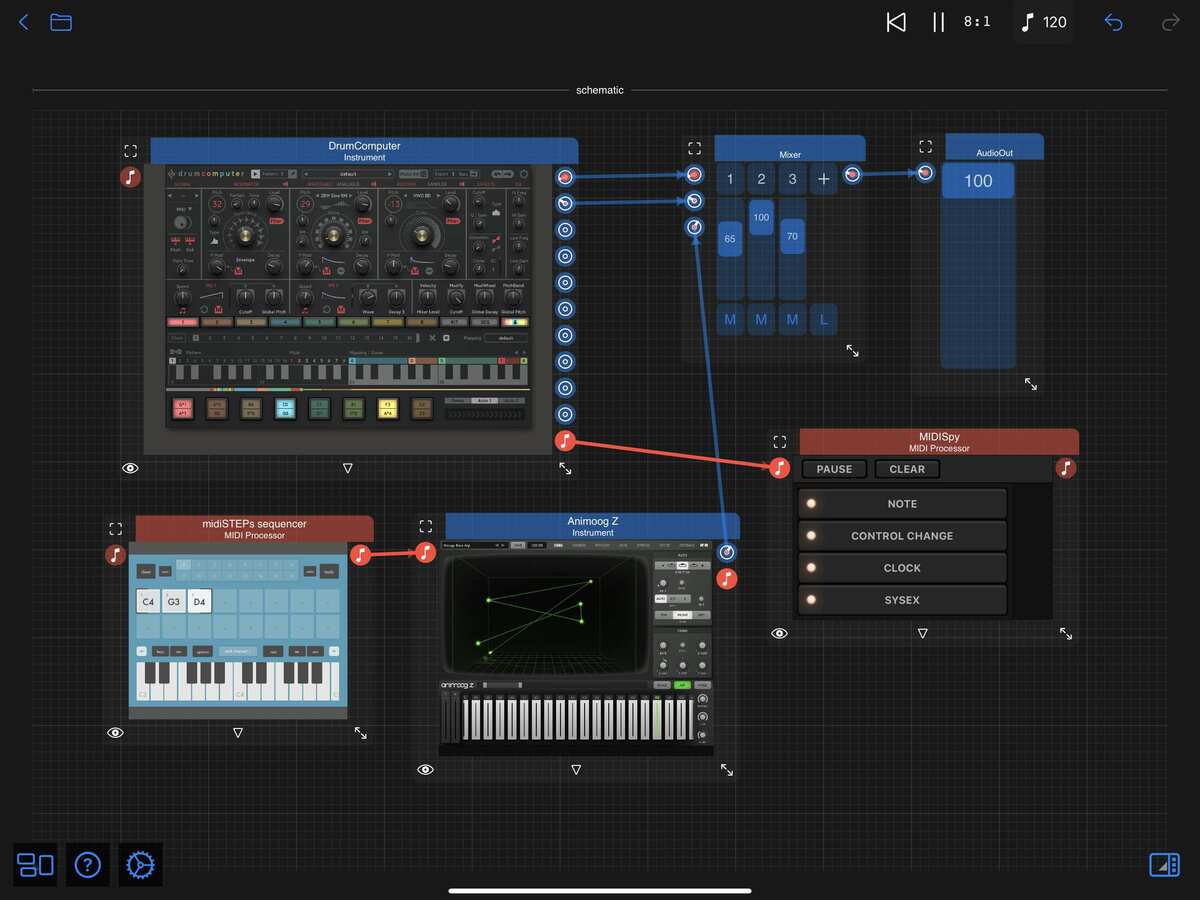

Here's an example screenshot:

In the example above the MIDISpy plugin doesn't work as it requires being connected on both sides. I'm starting to think about creating a more flexible VisualSwift MIDI monitor component that would work in this situation.

I'm interested in knowing an example where you'd use the MIDI output from DrumComputer so I can try it for testing.

I hope this works and that it doesn't break anything else.

It slipped under the radar for me but a big thing people should be aware of that is unique to this app is the Tx module. This lets you pipe audio out of the app to a receiver application “VisualSwift Rx”. I have only just noticed it so have not done any real testing but, for example, I was able to route the audio out of the app and into NS2 by adding the VisualSwift Rx app as an instrument in NS2.

@Jorge this overlaps with some of the conversation we were having in https://forum.audiob.us/discussion/48486/open-beta-wireless-audio-auv3. Are you planning on adding any options for audio in (assuming they are not already there and I just haven’t found them!) as it would be great to have this app as another proxy option for audio routing.

I like to send DrumComputer's MIDI into the Klevgrand "drum" apps like Skaka and Slammer. But really you can even send it to a synth, sampler or even a piano app and you will hear interesting rhythm patterns.

The latest version 1.2.7 build 50 prevents components from being dragged outside the schematic area.

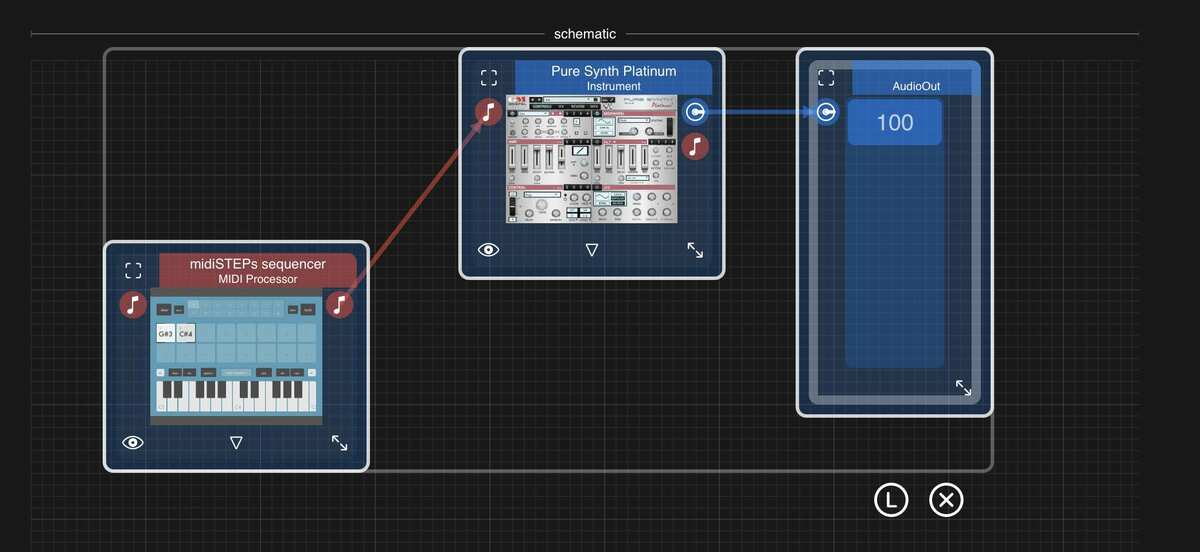

It should also work with multiple selections as in the following screenshot:

I haven't implemented manual resizing of the schematic area yet; I need to let the ideas settle first. I wonder if it should be possible to drag any of the 4 corners. Also, once it's manually resized, should it stay manual and prevent automatic resizing?

Maybe a pin icon on the bottom right corner that when enabled would keep the size fixed and when dragged would allow manual resizing. When disabled it would go back to automatic resizing.
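To make the pin idea concrete, here is a minimal sketch of the size policy being discussed. It is not VisualSwift's code: the `Rect` and `SchematicArea` names, the `margin` value and the method names are all assumptions made for illustration. The only behaviour taken from the post is that an enabled pin keeps the size fixed, dragging the pin resizes manually, and disabling it returns to automatic resizing.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    """Position and size of one component on the schematic (hypothetical type)."""
    x: float
    y: float
    width: float
    height: float

    @property
    def right(self) -> float:
        return self.x + self.width

    @property
    def bottom(self) -> float:
        return self.y + self.height


class SchematicArea:
    """Illustrative model of the pin behaviour; names and margin are assumptions."""

    def __init__(self, width: float, height: float, margin: float = 20.0):
        self.width = width
        self.height = height
        self.margin = margin
        self.pinned = False  # False means automatic resizing is active

    def fit_to(self, components):
        """Automatic mode: size the area to enclose every component.

        Does nothing while the pin is enabled, so moving components
        around never changes a manually chosen size.
        """
        if self.pinned or not components:
            return
        self.width = max(c.right for c in components) + self.margin
        self.height = max(c.bottom for c in components) + self.margin

    def drag_pin(self, new_width: float, new_height: float):
        """Dragging the pin sets the size by hand and enables the pin."""
        self.pinned = True
        self.width = new_width
        self.height = new_height

    def unpin(self, components):
        """Disabling the pin goes back to automatic resizing."""
        self.pinned = False
        self.fit_to(components)
```

With this policy, moving a component after a manual resize leaves the schematic size alone, which is the behaviour asked for in the reply below.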

Yes, this sounds like a good idea. If the schematic had been manually resized then it would be preferable for me for it not to automatically resize if I then moved some of the components around.

It would be useful to have a kind of “zoom out to show the whole schematic” button somewhere on the screen (maybe long press on the existing one in the bottom left?). If you accidentally zoom too far in on a component then you can get into the situation where the schematic can no longer get touch focus and you can’t zoom back out again.

Hi

Sorry to sound a little stupid

What advantages do I gain by using this app?

What usage examples could you provide for this app?

It looks exciting, a new approach, but I'm just wondering what new opportunities it opens up.

Many thanks in advance

I have followed that other discussion. It seems to me a very interesting project and also a lot more complex than the VisualSwift TX/RX components. My implementation only works between two apps on the same device and is actually very simple: the main app and the AUv3 extension both share a container, the main app writes frames to the shared container as soon as they arrive, and the extension reads frames as soon as they're requested. I think iOS is doing a lot of the hard work behind the scenes, like preventing multi-threaded race conditions, making sure both apps use the same buffer size etc. I was surprised how well it worked (fingers crossed) given its simplicity. Actually, for me it works well for buffer size = 1024 but not so well for lower sizes.
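For readers who want a picture of the write-on-arrival, read-on-request idea, here is a rough single-process sketch in Python. It is only an illustration: the real TX/RX pair shares a container between the main app and the AUv3 extension, whereas this `FrameRing` class (a hypothetical name) is a plain in-memory ring buffer with no cross-process sharing or thread safety. Padding with silence on underrun and dropping the oldest frames on overrun are assumptions of this sketch, not something described in the post.

```python
class FrameRing:
    """Single-writer, single-reader ring of audio frames (illustrative only)."""

    def __init__(self, capacity: int):
        self.buf = [0.0] * capacity
        self.capacity = capacity
        self.write_pos = 0  # total number of frames ever written
        self.read_pos = 0   # total number of frames ever read

    def available(self) -> int:
        """Frames written but not yet read."""
        return self.write_pos - self.read_pos

    def write(self, frames):
        """Transmitter side: store frames as soon as they arrive."""
        for frame in frames:
            self.buf[self.write_pos % self.capacity] = frame
            self.write_pos += 1
        # Assumption: if the reader falls behind by more than one buffer,
        # the oldest frames are dropped rather than blocking the writer.
        if self.available() > self.capacity:
            self.read_pos = self.write_pos - self.capacity

    def read(self, count: int):
        """Receiver side: return exactly `count` frames when requested.

        Assumption: missing frames are replaced by silence (0.0).
        """
        n = min(count, self.available())
        out = [self.buf[(self.read_pos + i) % self.capacity] for i in range(n)]
        self.read_pos += n
        return out + [0.0] * (count - n)


# Usage: the writer delivers 4 frames, the reader asks for 2 and then 4.
ring = FrameRing(capacity=8)
ring.write([1.0, 2.0, 3.0, 4.0])
first = ring.read(2)    # two real frames
second = ring.read(4)   # two real frames followed by two frames of silence
```

The second read shows what happens when the receiver asks for more than the transmitter has delivered so far: it still gets a full block, partly filled with silence.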

Interesting way of implementing this. Would it be difficult to implement the Tx module as a third plugin so we could, for example, use it in AUM to route audio into VisualSwift (or another host) by adding the VisualSwift Rx plugin to your schematic?


Yes, definitely, the same system can be used to create the RX component inside VisualSwift and the VisualSwift - TX plugin. You'd then even be able to use the VisualSwift - TX plugin and the VisualSwift -RX plugin to send audio between two other apps and bypass the VisualSwift main app altogether. I think that could be very flexible and useful.

Hello @Jorge and congrats for this ambitious project.

I have not joined the Test Flight yet...but I am very interested for the 3rd part of your plan.

Could you tell us a bit more...if possible.

Here's a video that gives an idea of what I have in mind:

https://youtube.com/watch?v=eW0nlJ6EoW8&t=412s

The idea is to use Apple's incredibly powerful Metal technology and Metal Shading Language. As the Metal code runs dynamically, it can be changed inside the app. The idea is that inside VisualSwift you're working with visual components in schematics that get automatically converted to MSL code and run on the GPU.

Very interesting approach! I know a lot of mobile artists in the audio-reactive visual scene who will find this app/tool very useful!

It's a territory that no one is seriously exploring on the iOS platform at the moment (except LK's Visual Synth attempt).

I am in 🙏✊

This is something I intend to try; the only possible issue is performance. The idea is that each instance of the VisualSwift-RX plugin would have its own unique name like VS-RX1, VS-RX2 etc. Inside the main VisualSwift app the TX component would allow you to select which RX to transmit to. I guess this is probably similar to the way Atom Piano Roll works for MIDI tracks, allowing access to other instances of the plugin. Thanks for suggesting it.

Great, I'll try this, thanks.

This is another good reason to support NDI for broadcasting the AV output to external devices/displays and other computers as it's built for this process and can then be combined with other sources.

I would never use an iPad on its own for this, but having an extra source from the iPad to combine with my laptop/desktop would be very handy.

This is very similar to the TouchDesigner approach, which I use on desktop.

I'm not sure how to answer this from a musician's point of view. As a coder my brain works visually and also I have the strong need to always simplify problems as much as possible. When I want to connect a MIDI track to an Instrument, I need to see them as objects and simply drag from one to the other. When I want to mix two audio tracks, I want to drag from two outputs to the same input. Side chaining ( if that's the right term ) always sounds like something complicated but it's actually not when you're working with a Visual schematic. If someone sends you a screenshot of their VisualSwift schematic, you'll know exactly the configuration they're using and you'd be able to replicate it yourself without any further explanation. I dream of creating my own music, I'm trying to create the app I'd find easy to use, in the process I might never have enough time to actually be any good at making music. I find the way this forum connects the musicians with the developers really valuable.

I do realise that Visual Programming is not for everybody. You can find it in other apps like Blender, Unreal, TouchDesigner, Rhino and Grasshopper etc. It tends to be associated with complexity, so only a small minority likes it. I think quite the opposite: it allows you to separate the complexity into much simpler and easier to solve problems.
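The drag-to-connect model described above (two outputs dragged to the same input means "mix them") can be sketched as a tiny graph. This is only an illustration of the concept, not how VisualSwift is built: the `Schematic` class, its method names and the node names in the usage lines are all hypothetical, and mixing is assumed here to be a plain sample-by-sample sum.

```python
from collections import defaultdict


class Schematic:
    """Toy schematic: nodes are names, a connection is (source -> destination)."""

    def __init__(self):
        self.inputs = defaultdict(list)  # destination -> list of connected sources
        self.sources = {}                # source node -> its audio samples

    def add_source(self, name, samples):
        """Register a node that produces audio, e.g. an instrument."""
        self.sources[name] = list(samples)

    def connect(self, src, dst):
        """Equivalent of dragging from an output to an input."""
        self.inputs[dst].append(src)

    def render(self, node):
        """Return the audio arriving at `node`.

        Several connections into the same input are summed, which is the
        assumed meaning of "mixing" here. Cycles are not handled.
        """
        if node in self.sources:
            return self.sources[node]
        feeds = [self.render(src) for src in self.inputs.get(node, [])]
        if not feeds:
            return []
        length = max(len(f) for f in feeds)
        return [sum(f[i] for f in feeds if i < len(f)) for i in range(length)]


# Usage: two tracks dragged into one mixer input, mixer dragged to the output.
s = Schematic()
s.add_source("drums", [1.0, 2.0])
s.add_source("bass", [0.5, 0.5, 0.5])
s.connect("drums", "mixer")
s.connect("bass", "mixer")
s.connect("mixer", "out")
mixed = s.render("out")
```

The list of connections is the whole configuration, which is why a screenshot of a schematic is enough for someone else to rebuild it.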

I think the real challenge is to provide good documentation.

Yes, this. I might also send MIDI output to LK or Atom so I can save and play back patterns as clips.

TestFlight v1.2.7 build 53 is now available.

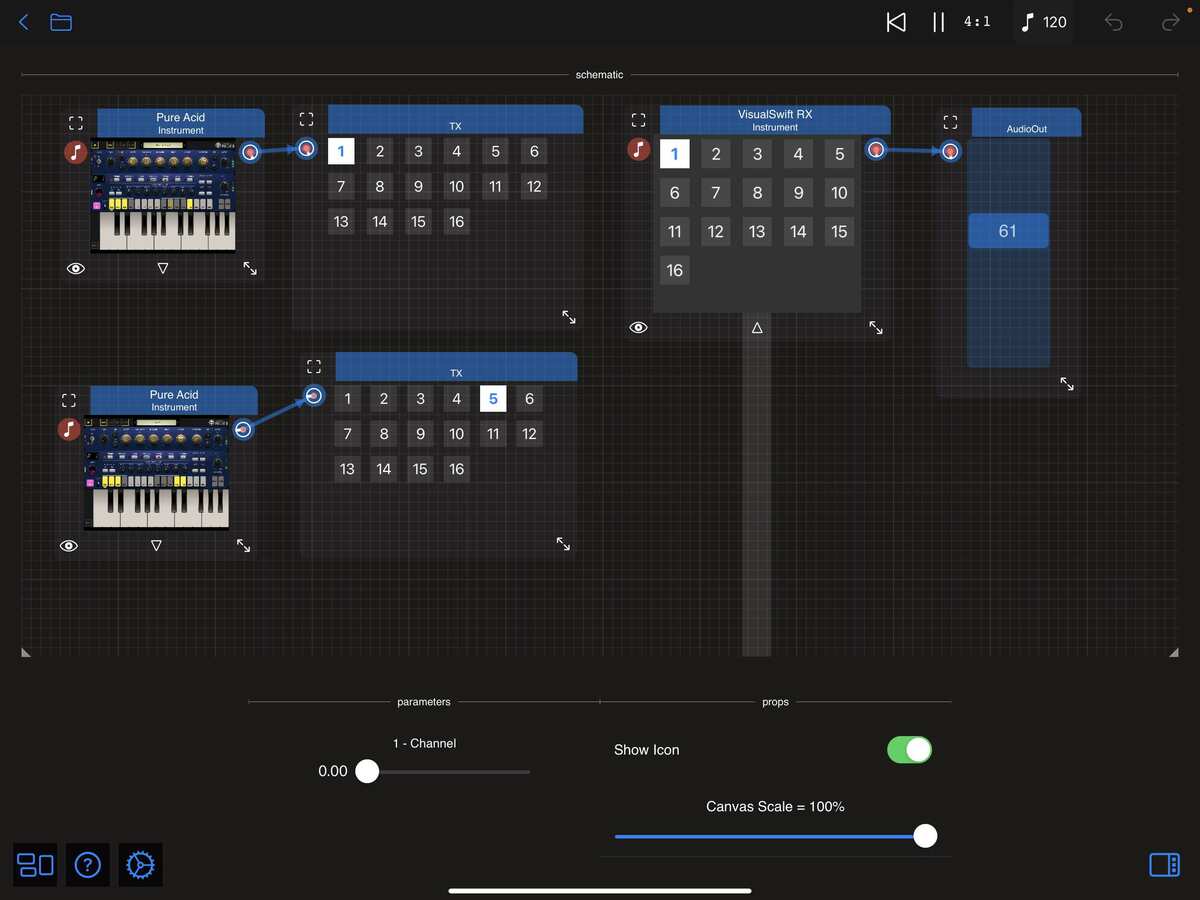

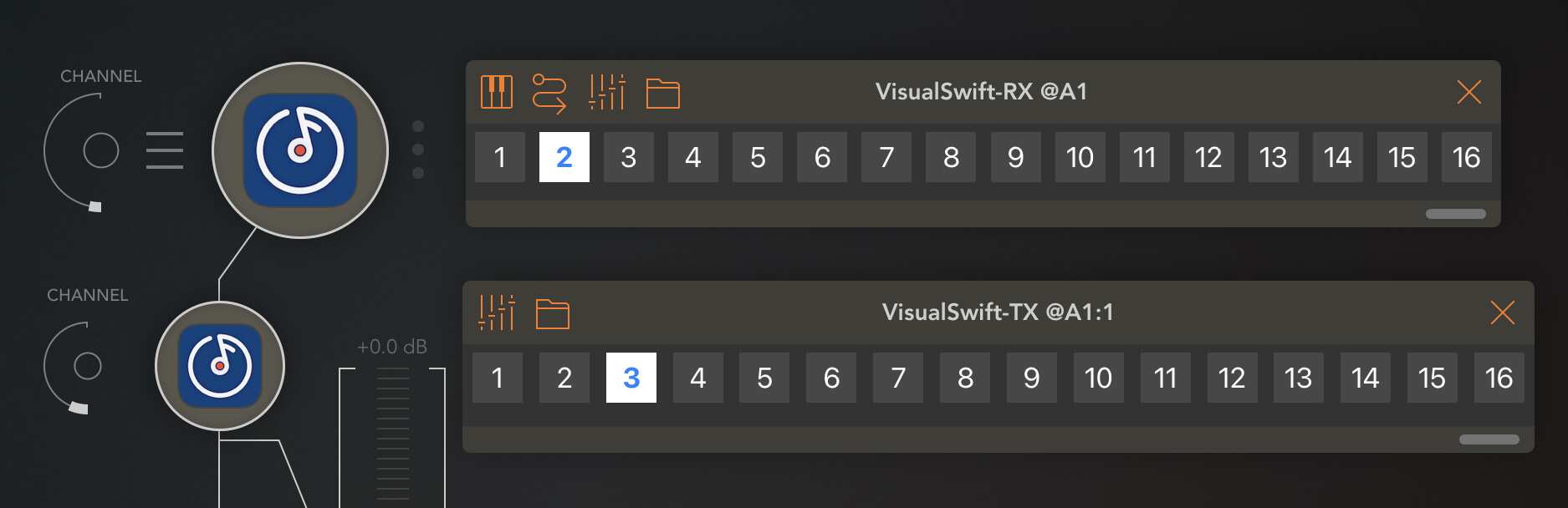

The TX component can now transmmit to one of 16 channels.

The RX plugin can now receive from one of 16 channels.

Here's an example of one RX plugin inside AUM:

And here's an example of a schematic that sends one stream to AUM's channel 5 and another to an RX plugin hosted inside VisualSwift through channel 1.

The RX plugin's channel index is a parameter which allows it to be controlled by MIDI and automated.
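As a rough picture of the 16-channel routing, the sketch below keeps one queue per channel: a transmitter writes into the channel it has selected and a receiver reads from whichever channel its index parameter currently points at. The `ChannelBank` class and its method names are hypothetical, the in-memory queues stand in for whatever the plugins actually share, and padding with silence when a channel is empty is an assumption.

```python
from collections import deque

NUM_CHANNELS = 16  # from the post: TX and RX each select one of 16 channels


class ChannelBank:
    """Illustrative bank of 16 independent audio streams, numbered 1 to 16."""

    def __init__(self):
        self.queues = [deque() for _ in range(NUM_CHANNELS)]

    @staticmethod
    def _check(channel: int):
        if not 1 <= channel <= NUM_CHANNELS:
            raise ValueError(f"channel must be 1..{NUM_CHANNELS}, got {channel}")

    def transmit(self, channel: int, frames):
        """TX side: append frames to the selected channel."""
        self._check(channel)
        self.queues[channel - 1].extend(frames)

    def receive(self, channel: int, count: int):
        """RX side: take `count` frames from the selected channel.

        Assumption: an empty or short channel is padded with silence (0.0).
        """
        self._check(channel)
        queue = self.queues[channel - 1]
        out = [queue.popleft() for _ in range(min(count, len(queue)))]
        return out + [0.0] * (count - len(out))


# Usage mirroring the example above: one stream on channel 5, another on channel 1.
bank = ChannelBank()
bank.transmit(5, [0.1, 0.2])
bank.transmit(1, [0.9])
to_aum = bank.receive(5, 2)
to_hosted_rx = bank.receive(1, 2)
```

Because the channel is just a number passed to `receive`, changing it at runtime (for example from a MIDI controller or automation) simply switches which stream the receiver pulls from.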

Wow, that was quick! Look forward to finding some time tomorrow to test this, thanks Jorge.

Wow. Thanks for this. Perennially game changing.

v1.2.7 build 55 is now available with a new VisualSwift-TX AUv3 plugin.

The VisualSwift-TX plugin runs as an Effect.

Here's an example using both the transmitter and the receiver:

Here's an example inside AUM receiving on channel 2 and transmitting on channel 3:

I didn't try it but you should be able to stream between two other apps without using the VisualSwift main app.

The previous TX component has been removed from the VisualSwift library and menu as it is now redundant.

To achieve the same functionality you can now host the new VisualSwift-TX Effect.

I think you've found something Drambo can't do.

This might work like a "wormhole" between DAWs, I think. I just want to create tunnels of TX/RX combinations just to see what's possible. Like using the audio output of an IAA app in a DAW that doesn't allow IAA apps.

Dr. Peter Venkman : Human sacrifice, dogs and cats living together... MASS HYSTERIA!

Just starting to test a few things with the Tx and Rx plugins. I was able to pipe two separate sequenced instruments from AUM into two NS2 tracks using two Tx plugins in AUM and two Rx instruments in NS2. I haven’t measured the latency from AUM to NS2 but it was not great enough for me to notice.

The really good thing is that I shut down NS2 and AUM and then restarted my AUM and NS2 project and the plugins reconnected without intervention. This is a biggie in my opinion as it removes a lot of the friction from integrating this type of plugin in a workflow.

Going from GB guitar to Loopy or AUM the sound is distorted.

I was able to drive 3 instances of Obsidian from AUM by pointing 3 Atoms at separate tracks and used 3 Rx in AUM and 1 Tx on each NS2 track. I closed both AUM and NS2 then started NS2 (so its MIDI input port was visible), then the AUM session. Hit play and it all started playing.