Audiobus: Use your music apps together.

VisualSwift connecting to other apps.

I wonder which is the best technology to use to route audio from one app to another.

Inter-App Audio (IAA) would seem to be the best option, but since it was deprecated in iOS 13 it could disappear at any point.

As I understand it, deprecated basically means "don't use it from now on", and it will only remain available for a period of time to support older apps.

I've seen it mentioned a few times that the replacement for IAA is AUv3, although that doesn't make complete sense to me, or maybe I'm missing something.

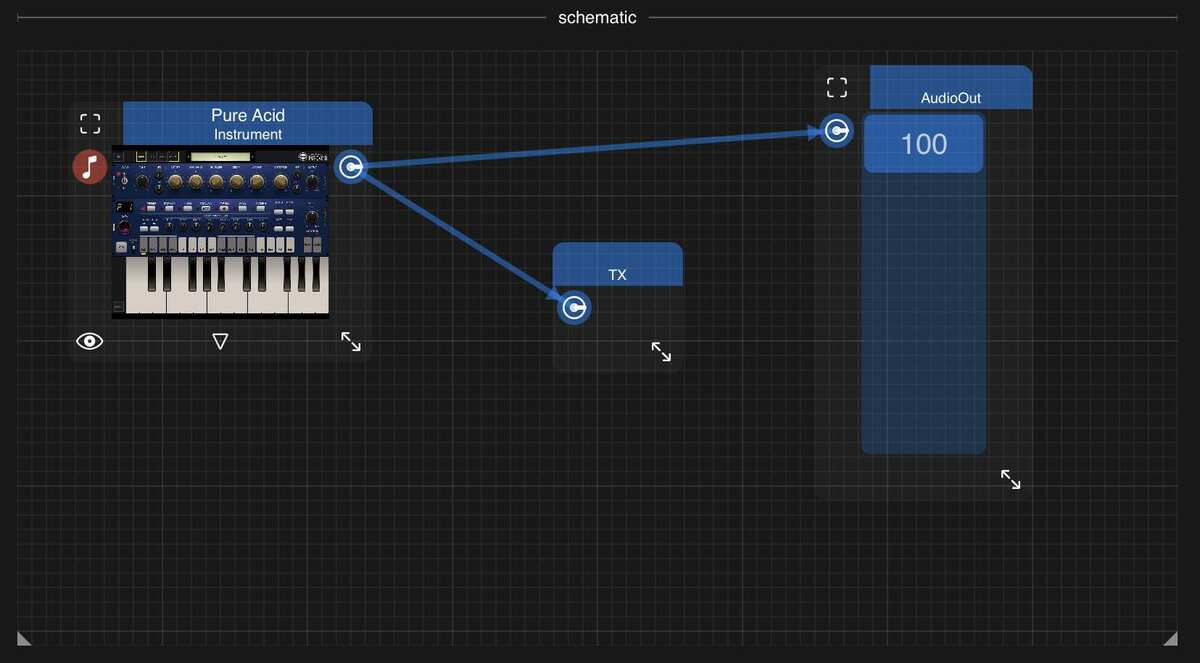

In the latest VisualSwift I've implemented a TX component to send audio to other hosts.

Whichever audio stream is connected to the TX component is sent from VisualSwift to a contained AUv3 receiver plugin.

Here's an example:

And here's the receiver hosted inside AUM:

The audio frames are sent through a shared container, which is the part that doesn't seem ideal. It works well for me with frame size = 1024 but not so well at lower sizes. Is 1024 acceptable?
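To put the frame-size question in numbers, here is a small sketch of how long one buffer lasts. It is plain arithmetic in Python, not anything taken from VisualSwift; the function name `buffer_latency_ms` and the 44.1 kHz and 48 kHz sample rates are assumptions, since the rate in use isn't stated above.

```python
def buffer_latency_ms(frames: int, sample_rate_hz: float) -> float:
    """Duration of one buffer of `frames` sample frames, in milliseconds."""
    return 1000.0 * frames / sample_rate_hz


# Assumed sample rates; the thread does not say which one is in use.
for rate in (44_100, 48_000):
    for frames in (128, 256, 512, 1024):
        print(f"{frames:>5} frames @ {rate} Hz: {buffer_latency_ms(frames, rate):.1f} ms")
```

At 48 kHz a 1024-frame buffer lasts roughly 21 ms, and roughly 23 ms at 44.1 kHz. Any hop that waits for a full buffer before passing it on adds about that much delay again, which is usually fine for recording into another host but can be noticeable when playing live.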

My question is whether there is another recommended technology that I'm missing, or another way of using AUv3 for this purpose.

Thanks

Comments

I cannot really comment, but I think that routing audio between apps is only possible with IAA or some other protocol. We have apps that send audio over WiFi to other iDevices.

AUs can only send audio within an AU host like AUM, and the routing would ultimately be done by the host.

But I have no idea how to implement this 😅

Hopefully, one of our many developers will reply...

Thanks @tja, I think you're right. I'm starting to think that Apple is deprecating a technology without having a proper replacement. Maybe IAA is the way to go; if there isn't a proper replacement, maybe it won't be removed so soon.

I think that it will stay for a long time.

It will just receive no updates or fixes anymore.

@Jorge, could you please change the word "hosts" in the title to "apps"? People tend to interpret hosts to mean other computers. You're asking about connecting to apps, which can be AUv3 hosts.

I think the answer would be “Audiobus” (see the name of this forum.)

When the deprecation happened, Michael said that he would try to find an alternative transport to keep audio communication flowing. The pre-IAA Audiobus was implemented on sockets, I believe.

I could be corrected since I’m only mildly dangerous as a programmer 😉.

@uncledave no problem, I've changed it.

I'm loving that sentence 😅😅😅

Would be great on a T-Shirt too 😅😂🤗

I'm slowly arriving at the same conclusion. I've read that Audiobus is easier to implement than IAA, so it might be preferable too.

https://developer.audiob.us/doc/_integration-_guide.html

Wait…is Audiobus a different technology from IAA? I thought they were the same.

No, AudioBus was the original - before Apple created IAA.

But AudioBus currently uses IAA, IIRC.

But in case IAA vanishes, AudioBus will continue to work 🤗

It's really interesting to re-visit the history of AudioBus as told by @Michael.

https://atastypixel.com/thirteen-months-of-audiobus/

TL;DR @Michael tried to use Virtual MIDI's SysEx protocols to connect apps like "audio cables" over MIDI and got it to work.

In the words of Bobo Goldberg in a comment to @Michael:

Then he got dozens of developers to implement the interfaces and the rest is history. We are all fortunate that Apple did not shut it down with an API update. The iOS music production marketplace exploded. Then...

Audiobus led to Apple's IAA APIs.

Then IAA issues led to AUv3 to improve stability (the 340MB RAM limit had a stability intent).

This TX/RX connection in VisualSwift probably relies on a specific Apple audio API under the hood. The thing that gets out of control quickly is screen management between so many apps. It becomes a new "sequencing" function to open the right app at the right time to keep up with the "clock".

@Jorge

Just installed the public version. The beta version with the recorder was really crashing a lot. In fact, I had to just delete the test projects that had the recorder object because they were crashing the public install.

I like the TX object to send audio to a daw better anyway. I did a quick fresh test and it appears to be working well.

I would think one viable long-term solution would be to integrate network audio to go from host to host or device to device over Ethernet or WiFi (or app to app). On desktop, for example, this can be done with NDI (audio only or video+audio).

It would be possible for any host to integrate NDI audio in theory (the SDK is available).

But I'm not a developer so I don't know how easy this is.

The only catch is that other iOS hosts would have to integrate NDI too, but at least it would be very useful for streaming audio to and from desktop (to me this would be much more useful than iOS host to host anyway).

I removed the recorder a few versions ago as it wasn't ready. Since then the audio engine has improved, and the recorder hasn't caught up with the new system.

I thought that providing a way to stream audio to another app was probably a higher priority and would allow recording inside another host.

The TX/RX system has been working well for me with frame size = 1024.

The way the TX/RX works is quite simple. The AUv3 RX is implemented as an extension contained inside the main app, and they share a container. The main app saves frames to the shared container and the AUv3 RX reads them. When running in debug mode attached to Xcode I don't see anything being saved to storage, so it's quite fast, but some coordination must be happening to prevent issues with reading and writing at the same time.
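For anyone curious what that kind of coordination can look like, here is one common pattern: a single-frame slot guarded by a sequence counter. This is a sketch under assumptions, not a description of what VisualSwift or iOS does. `FrameSlot`, `publish` and `try_read` are hypothetical names, and a `bytearray` stands in for the shared memory. The writer makes the counter odd while it is updating the slot and even again when it has finished; the reader rejects anything it read while the counter was odd or changing.

```python
import struct
from typing import List, Optional

# Hypothetical slot layout: 32-bit sequence counter, 32-bit sample count, then float32 samples.
HEADER = struct.Struct("<II")


class FrameSlot:
    """One-frame mailbox over a flat byte buffer (a stand-in for shared memory)."""

    def __init__(self, max_frames: int) -> None:
        self.max_frames = max_frames
        self.buf = bytearray(HEADER.size + 4 * max_frames)

    def publish(self, samples: List[float]) -> None:
        """Writer side: replace the slot contents with a new frame."""
        if len(samples) > self.max_frames:
            raise ValueError("frame larger than slot")
        seq, _ = HEADER.unpack_from(self.buf, 0)
        # Odd counter: a write is in progress.
        HEADER.pack_into(self.buf, 0, (seq + 1) & 0xFFFFFFFF, len(samples))
        struct.pack_into(f"<{len(samples)}f", self.buf, HEADER.size, *samples)
        # Even counter: the frame is complete.
        HEADER.pack_into(self.buf, 0, (seq + 2) & 0xFFFFFFFF, len(samples))

    def try_read(self) -> Optional[List[float]]:
        """Reader side: return the frame, or None if a write overlapped the read."""
        seq_before, count = HEADER.unpack_from(self.buf, 0)
        if seq_before % 2 == 1:
            return None
        samples = list(struct.unpack_from(f"<{count}f", self.buf, HEADER.size))
        seq_after, _ = HEADER.unpack_from(self.buf, 0)
        return samples if seq_after == seq_before else None
```

Because the sample count travels in the header, the reader follows a change of frame size on the writer's side without any extra signalling. In a real extension this would be written in Swift or C with proper atomics and memory barriers; Python is used here only to show the logic.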

I've tried more sophisticated systems with ring buffers and saving to memory-mapped files, but while trying to optimise them I noticed that the simplest method worked best.

The more sophisticated system was very flexible with writing and reading with different buffer sizes, but again it seemed unnecessary, because when you change the buffer size in one app, the other app automatically picks up the new size.
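For readers who haven't met the ring-buffer approach, here is a minimal sketch of how it decouples the write size from the read size. It illustrates the general technique only, not the code that was tried in VisualSwift; the class name `RingBuffer` and the choice to pad underruns with silence are assumptions.

```python
from typing import List


class RingBuffer:
    """Fixed-capacity FIFO of float samples (one writer, one reader)."""

    def __init__(self, capacity: int) -> None:
        self._data = [0.0] * capacity
        self._capacity = capacity
        self._read = 0   # total samples consumed so far
        self._write = 0  # total samples produced so far

    def available(self) -> int:
        """Number of samples written but not yet read."""
        return self._write - self._read

    def write(self, samples: List[float]) -> int:
        """Store as many samples as fit; return how many were accepted."""
        n = min(len(samples), self._capacity - self.available())
        for i in range(n):
            self._data[(self._write + i) % self._capacity] = samples[i]
        self._write += n
        return n

    def read(self, count: int) -> List[float]:
        """Return exactly `count` samples, padding with silence on underrun."""
        n = min(count, self.available())
        out = [self._data[(self._read + i) % self._capacity] for i in range(n)]
        self._read += n
        return out + [0.0] * (count - n)


# The sender writes one 1024-sample block; the receiver pulls it in 256-sample blocks.
rb = RingBuffer(4096)
rb.write([float(i) for i in range(1024)])
first_block = rb.read(256)
```

The flexibility costs extra buffering, and therefore extra latency, which fits the observation above that it isn't needed when both sides already agree on the frame size.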

So in conclusion, the TX saves one frame of the given size as soon as it arrives, and the RX reads one frame each time the other host requests one. Apple's audio engine already seems to be doing a lot of the hard timing work, which did surprise me.

Networked audio would be great; I'm just always conscious of latency when playing live.

I intend at some point to explore Apple's Low-Latency HLS: https://developer.apple.com/documentation/http_live_streaming/enabling_low-latency_hls

Any recent technology from Apple tends to be easier to implement inside iOS.

NDI sounds like one to research if it's already proven to work well on desktop. Thanks for the suggestion.