Audiobus: Use your music apps together.

Comments

I can't keep up with all this great stuff in here!!!

@david_2017 beautiful

@Intrepolicious you're going to get divorced soon! 😂😂

Beautiful

Took a moment to catch up. Loads of great inspiring stuff.

Sure thing Tony, I will surely try!

You may not be wrong!

Thank you!

Really love this thread so far. I really enjoy seeing the process behind your work and the tools you are using.

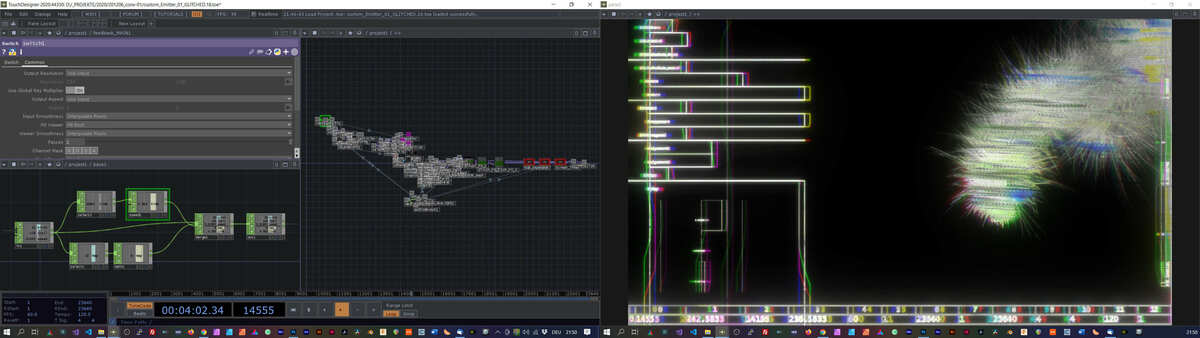

I hope it's okay to try something slightly different here. This is not a screen capture but an audioreactive patch that I built in TouchDesigner; the initial performing and recording was done in AUM on my iPad:

Here is also some background information on this:

uberdrone is a series of études on spatial noise and feedback chains in urban environments.

the sonic material is collected via experimental field recordings such as ground velocity, impulse responses and ambisonic recording. afterwards all the collected forensic material is processed in a hybrid analogue / digital setup, which is recorded as a one-take performance. this final sonic material is the foundation for these audioreactive video setups.

Fascinating

Thank you

A little side note: if you have any questions regarding the process behind this, feel free to ask me.

@vrtx_void Yes that is cool AF!!!!

I did not capture my screen recording of this..... a different track for me... more Lofi Trip Hop... wondering if I should finish it.

thanks, really glad that you guys like that

And I really like lo-fi trip hop stuff! You should definitely finish it, it has a great vibe to it. Maybe more saturation?

?

Finish it

Wow I love this so much!!

thank you 😄!

My largest iPad project by far. Mostly using LK in AUM, but I did a session in NanoStudio2 and exported stems, as AUM (on my iPad) never would have carried something this large without problems. There's some live guitar that I'm definitely going to redo with a recording instead. I used LK for all MIDI CC, as I felt it not only takes less CPU but also gives better control than a sine or whatever waveform one can use. I even used it for some strange "fills" from Drum Computer (remix option).

@onerez I think it sounds worth finishing. Was kinda disappointed when it faded out.

@vrtx_void Cool effect there, computer or iOS? Looks a bit like SunVox graphics

dude this is great!

@Pxlhg wonderful, atmospheric, psychedelic gypsy dreams! The hair effect at the end freaked me out though lol

Ha ha, thanks, sorry for the scare

Thanks! Glad you like it.

I really love Alexander Zolotov's apps, his designs and his obsession with the demoscene, which we both share.

The visuals are programmed in Derivative TouchDesigner and based on particle-emitter-on-GPU concepts by paketa12:

and noise feedback displacement glitches based on concepts by noto the talking ball:

The interactivity between sound and image is based on a custom audio analyzer that I've programmed myself, which maps certain frequencies to specific functions and processes that generate the velocity, intensity and overall generative aspects of the visuals:
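For anyone curious what that kind of mapping could look like in code, here is a minimal sketch in plain Python. It is not the actual TouchDesigner analyzer; the band edges, the parameter names (`velocity`, `intensity`, `turbulence`) and the helper functions are all assumptions made up for illustration. It splits one audio frame into frequency bands and returns each band's share of the energy, which a visual system could then use as a control value.

```python
import cmath
import math

# Hypothetical band edges in Hz and the visual parameter each band drives.
# These names and ranges are illustrative assumptions, not the original patch.
BANDS = {
    "velocity": (20.0, 250.0),
    "intensity": (250.0, 2000.0),
    "turbulence": (2000.0, 8000.0),
}


def band_energies(frame, sample_rate, bands=BANDS):
    """Return each band's share (0..1) of the spectral energy in one frame."""
    n = len(frame)
    energies = {name: 0.0 for name in bands}
    # Naive DFT over the positive-frequency bins; slow but dependency-free.
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        coeff = sum(
            frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        power = abs(coeff) ** 2
        for name, (low, high) in bands.items():
            if low <= freq < high:
                energies[name] += power
    total = sum(energies.values())
    if total == 0.0:
        return energies  # silent frame: every control value stays at zero
    return {name: energy / total for name, energy in energies.items()}


def sine_frame(freq, sample_rate=16000, n=256):
    """Generate a test frame containing a single sine tone."""
    return [math.sin(2 * math.pi * freq * t / sample_rate) for t in range(n)]


# A low tone should push almost all energy into the "velocity" control.
params = band_energies(sine_frame(125.0), 16000)
```

In a real-time setup you would use an FFT rather than this naive DFT, and probably smooth the values over time so the visuals don't jitter.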

Wow, cool! Thanks for all that info. I used to do abstract stuff in Modo and Blender and then use After Effects as the collective end-station. It's always a lot to learn, but foremost it takes some heavy computing, a resource I don't possess.

Decided to get lost in the deep end on this one:

I’ve got some catching up to do listening to this thread after a hard working week. I recorded this noodle over the weekend when tired, Gauss loop and some of those new IK fx...

https://soundcloud.app.goo.gl/jUyyZMVEtLeuWdBKA

Sorry for breaking the screen capture rule 😱

Been wanting to give this thread a go for a while. I've really dug a bunch of stuff I've heard, and love the idea of sharing little weird snippets of stuff. Thanks @reasOne for starting the thread.

I’m happy that you did share something.

There are so many gems in this thread and this is one of them.

Super nice sounds you have there, and a very atmospheric piece.

Thanks @reasOne for starting the thread +1

Having a pretty productive day today:

Some lovely moments in here 👍

Buzzing off this 👍

https://youtu.be/zASsHxp8fus

First Drambo patches. Just a simple pad and some bouncy chords. Deluge sequencing Drumcomputer. Drambo is a creative beast for sure. Going to need to get a knobby MIDI controller.

I love where this one’s going, I say finish it if you can 👍

First few minutes with the new Kymatica AU3FX series, and a lovely strumming technique.