
Using Synthjacker to record any app on your iPad or phone

This comes with some caveats, but it might be a reasonable workaround until Synthjacker gets a few much-needed features.

You need hardware routing for this to work at the present time, so you need to be able to route both the audio and the MIDI in and out of the iPad or phone. In my case I'm using a Scarlett 2i4 which allows for MIDI to be routed in and out. I would imagine that adding virtual MIDI to SynthJacker would open this up to far more audio interfaces, allowing the MIDI to be routed within the iOS device rather than externally.

The main drawback is that in my case this method can only record in mono, but people with interfaces that have more inputs and outputs might find a way to record stereo. Maybe the iConnectivity boxes can do stereo. Another drawback is that this is not a pure digital chain: the audio is routed through the interface's preamp, so there is A/D and D/A conversion in the chain, as well as the chance of noise from the preamp.

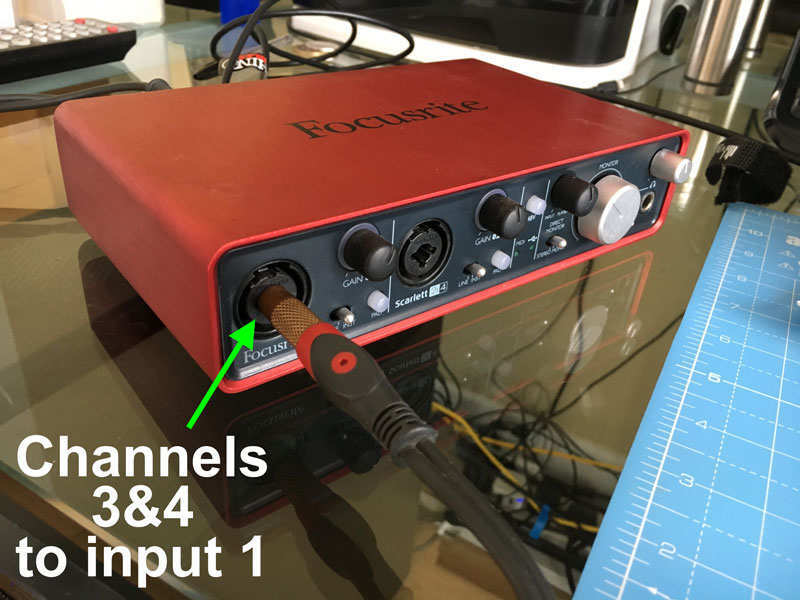

So here is the hardware setup:

I've connected the Scarlett 2i4 to the iPad, patched MIDI In to MIDI Out, and patched audio outputs 3+4 into audio input 1. SynthJacker does not let you choose which input it samples, so it defaults to the first one available, meaning input 1 must be used for audio coming back into the iPad or phone.

Then load up AUM and add an instrument into Channel 1. Any instrument that can receive MIDI will work, whether AU or IAA. Set the output of this channel to channels 3&4 on the Scarlett (remember that channels 1&2 must be reserved for the audio coming back into SynthJacker).

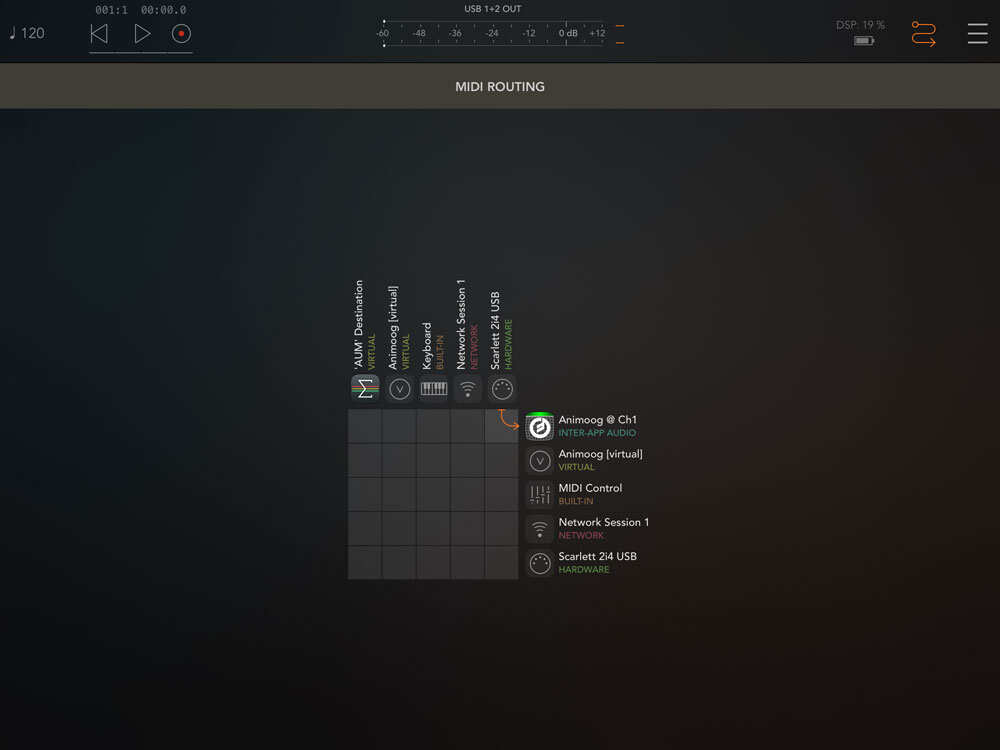

Go to the MIDI routing in AUM and route your hardware MIDI to the instrument on Channel 1:

And you can now use SynthJacker to auto-sample that instrument. Just set the MIDI and audio destinations to your interface.

A few things @coniferprod might want to consider:

Please add the ability to send MIDI internally on the iOS device so that hardware MIDI routing is no longer needed. Any interface that can handle audio on two separate inputs/outputs could then be used.

Also, please give us the option to save the samples in WAV format rather than AIFF, since WAV is far more widely compatible.

Eventually the ability to do all this via AudioBus would be really awesome, but this request is probably far more difficult to implement than the first two.

Comments

Are you sure you can't route outs 3&4 into ins 1&2? I do this on my PreSonus AudioBox ($99) and get stereo sample sets with SynthJacker.

I agree that "AudioBus" support would be a great update. It covers a multitude of use cases: MIDI and audio plumbing with hundreds of supported apps that we want to "clone" because they are being abandoned or lost to us with iOS updates. AudioBus has better developer support than Apple and works on more devices, so the update would bring more potential customers.

Thanks for sharing the video and technical details. "Auto-sampling" for iOS is great for those without access to laptops or desktops, and you can't beat the prices here.

I tried SynthJacker for recording VSTs from my desktop using two Roland Duo Captures (one on the desktop, one on the iPad) and could only get mono results. It needs stereo! Seems to be an old-school sampler storage-saving ethic hangover (but dammit, I have tonz of Zip disks ready and waiting for sweet, sweet stereo samples). Hope it gets it, as I don't have much use for mono samples.

I'm sorry, I thought I was recording both channels since I had them cabled up. Stereo recording should be a priority for the update efforts. The developer seems eager to please and capture more users.

@richardyot Thanks for sharing your method! I actually need to try that myself, since I usually just sample a HW synth or an Audio Unit instrument.

I do try to read all feedback and requests, and there is a development backlog I’m ploughing through as time permits...

My goal is to provide a cool and useful utility that fills the role of MainStage's Autosampler or SampleRobot on the iPad. With the help and support from you and many others, SynthJacker has taken big leaps forward in a short time after its release, so thanks!

@coniferprod thanks for listening, can't wait to see how this app will develop!

I made a complex piano using Loopback, and every sample required trimming by hand in AudioLayer to reduce latency. It is just a few milliseconds, but that is enough extra silence to require trimming. It would be great if the app could detect that "take off" from zero and use it as the cut point when slicing the recording. Still, this is a good way to get good samples into AudioLayer.

We all wonder what an update for AudioLayer will bring to increase sales of the App.

Trimming the silences is on the roadmap, see here:

https://www.blipinteractive.co.uk/community/index.php?p=/discussion/comment/6372/#Comment_6372

It's true, trim silence is happening, and normalization too. Just needs a little more testing... Also one weird velocity related bug (AU only) was crushed in the process.

Awesome. I realized today that using the "AU Hosting" feature to sample one of my Apps creates "stereo samples". Using the external feature defaults to mono samples even though I select my stereo capable audio interface for the input. It might be coded to default to mono inputs to work with any iPad mic.

Using "AU Hosting" also seems to have some bugs related to chopping the samples by Velocity-Note and inserting the right sample into the right file. I imported a sample with 5 velocity layers and on some notes the samples get mixed... soft on top and loud near the bottom. Just a few in the whole complex of 100-200 samples but enough for me to switch to external to avoid that. There were also some chopping issues but that seems to have improved with an update.

For the velocity variations I have switched to running SynthJacker at a single velocity and then importing that run into a specific layer and selecting the velocity range. I do this for 5 or so runs and get a well sorted set of layers.
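The layer-by-layer approach above comes down to deciding which velocity range each single-velocity run should cover. As a minimal sketch (not anything AudioLayer or SynthJacker does internally), the Python below assumes each layer covers everything from just above the previous sampled velocity up to its own, with the top layer stretched to 127. The function name `velocity_ranges` is hypothetical.

```python
def velocity_ranges(sampled_velocities):
    """Map each sampled velocity to a (low, high) MIDI velocity range.

    Assumption: a layer covers every velocity from just above the
    previous layer up to and including its own sampled velocity.
    """
    ranges = []
    low = 1  # MIDI note-on velocities run from 1 to 127
    for velocity in sorted(set(sampled_velocities)):
        ranges.append((low, velocity))
        low = velocity + 1
    # Stretch the top layer to 127 so no velocity is left unmapped.
    if ranges and ranges[-1][1] < 127:
        ranges[-1] = (ranges[-1][0], 127)
    return ranges


if __name__ == "__main__":
    for low, high in velocity_ranges([20, 50, 80, 110, 127]):
        print(f"layer covers velocities {low}-{high}")
```

Other splits are just as valid, for example centring each range on the sampled velocity. The point is only that five runs give five non-overlapping ranges that together cover 1 to 127.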

So, fixing the start of the samples in the file should make me very productive.

I appreciate the developer joining us here to give us status. I have noticed he also participates on the NS2 Forum to give status on updates and accept feature requests.

I know developers appreciate it when we contact them directly via email, but I get interested in some apps just by tracking the interaction between a developer and a user that I see here, and I grow to appreciate the true value of the app in that documented exchange of posts on a forum.

There's a really hot app where the developer is interacting with a large group of new customers, accepting feature requests in the process and advising on product futures. That encourages more people to buy, confident that the "show stopper" issue has been identified and will be addressed as a top priority.

We have also seen this attempt to work with the Forum go south and cause the developer to back off and not return. Usually the bigger companies tend to avoid working at this level of disclosure and commitment.

Audio Units are stereo - external synths are mono mostly because I couldn't figure out how to select some other input than the first! I'll look at it again later, but so far it has been beyond my reach.

The velocity problem should be fixed now in v0.4.0. It only affected the AU side of things, and was a total goof on my part. Sorry about that.

With v0.4.0 you should indeed now be able to trim the silence from the start of the sample. Or "silence" -- you need to do a bit of preparation to find the power level in decibels that is appropriate for your source. The default -54 dB should work as a starting point.
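As a rough illustration of what a threshold like -54 dB means in practice, here is a minimal Python sketch of trimming leading silence. It is not SynthJacker's actual code. The function names are hypothetical, and it assumes mono samples stored as floats between -1.0 and 1.0, comparing each sample's absolute value against the threshold rather than a measured power level.

```python
def db_to_amplitude(db):
    """Convert a level in dB relative to full scale to a linear amplitude."""
    return 10 ** (db / 20)


def trim_start(samples, threshold_db=-54.0):
    """Drop leading samples that stay below the threshold.

    `samples` is a list of floats in the range -1.0..1.0 (an assumption
    for this sketch). Returns the list from the first sample whose
    absolute value reaches the threshold, or an empty list if none does.
    """
    threshold = db_to_amplitude(threshold_db)
    for index, value in enumerate(samples):
        if abs(value) >= threshold:
            return samples[index:]
    return []


if __name__ == "__main__":
    recording = [0.0, 0.0005, 0.001, 0.01, 0.5, 0.2]
    print(trim_start(recording))  # quiet lead-in removed
```

At -54 dB the linear threshold is roughly 0.002 of full scale, so a very soft source (a piano sampled at velocity 10, say) may need a lower threshold before its attack is detected, while a noisy source may need a higher one.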

I can't promise to implement all feature requests, but I do evaluate them to see if they fit the overall concept. I'll also try to quickly fix what is obviously broken, but this app has been surprisingly difficult at times -- audio is like that by definition, and while I have been developing iOS apps for 10 years now, it has not been all roses. Especially the AU support was not as smooth as I would have liked (still couldn't get Apple's sequencer to work, so I'm playing the notes in a kludgy way). So I appreciate honest but courteous feedback.

@coniferprod thanks for the update, we appreciate your efforts

This is really good news. I'll get the update when it's available and do some more sampling.

I think the AU Hosting option will be my go-to to preserve the audio quality. I'm also hoping the trimming is accurate so I can make 200-800 file sample sets and not want to do any hand trimming.

(Since I've already purchased) I can recommend you raise the price when these issues are all resolved. When more of us start singing the praises of the App there will be more demand.

At $3 it's a no-brainer even with bugs, because the only alternatives are desktop solutions.

When it starts to allow sampling any AUv3 it should be worth closer to $10 for the crowd that uses AudioLayer and NS2. There's always the possibility that AudioLayer adds this as a product feature or IAP extension, but @Virsyn has not disclosed what they are working towards. I think they would get the most benefit by adding auto-sampling, since AudioLayer can work as an Instrument or in the FX Slot of AUM to record anything AUM can load (AU or IAA).

As a next step for you, hosting IAA apps would be investment protection for most long-term iOS users. NanoStudio 2, for example, won't run any IAA apps. Almost every IAA app also supports AudioBus, getting help with AudioBus is really easy, and it's a very mature, well-documented set of tools for connecting audio, with additional features for MIDI as well.

Thanks for sharing this status. I'm excited to test the "AU Hosting" for volume and timing improvements. If something sounds good, I'll do the hand editing or look for tools to trim in batches. I think there are desktop solutions, but likely only for wave file formats, though tools to convert to/from wave probably exist. "Sox" was the tool in the distant past for converting audio in bulk.

The v0.4.0 update fixes the variations in Velocity for "AU Hosted Apps".

The Trimming Start and Both options do not seem to trim the start. I get variable lengths of silence corresponding to the Note's frequency. When I play a 2-handed 6 note chord voicing it arpeggiates the 6 notes from bottom to top. So, hand-editing is required for every note.

I hope you can solve the trimming of silence detail in a subsequent update. I'd prefer "both" work well but "start" is essential for me to make large multi-layered keyboard samples that involve 100-200 samples.

I did select a Bass app that trimmed "start" perfectly but 2 high quality piano apps failed to generate trimmed samples.

Hmm, I did quite a few runs of sampling from AUs, and did find the behaviour consistent. Do you mean that either the Start or Both options are not working properly, or that neither of them is? They are essentially parts of the same operation, i.e. Both is just Start + End. Anyway, I'll look into them. Maybe my logic is faulty.

Any comments about the normalisation? I got a report saying the level is a bit on the low side. It's straight out of AudioKit, but maybe I'm using it wrong.
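For anyone wondering what normalisation does to the level, here is a minimal Python sketch of plain peak normalisation. It is not AudioKit's implementation and not what SynthJacker runs. The function name and the `target_db` parameter are hypothetical, and samples are assumed to be floats between -1.0 and 1.0.

```python
def normalize_peak(samples, target_db=-1.0):
    """Scale samples so the loudest one sits at `target_db` dB full scale.

    Assumes float samples in -1.0..1.0. A silent input is returned
    unchanged, since there is no peak to scale from.
    """
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)
    gain = 10 ** (target_db / 20) / peak
    return [s * gain for s in samples]


if __name__ == "__main__":
    quiet = [0.1, -0.25, 0.05]
    print(normalize_peak(quiet, target_db=0.0))
```

Because every sample is multiplied by the same gain, a single loud transient in the file sets the gain for the whole sample. That is one possible reason a normalised sample can still sound quieter than expected.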

I'm trying to make samples from 2 sensitive piano apps. So, my first run is typically velocity 10 or 20. It captures these subtle sounds but the chopping leaves large silent sections around the sample recording. So, you mentioned (in another thread) that I need to use the "Noise Floor" setting to fine tune the Trim options. I'll keep tweaking using the trim Start and see if I can find a useful setting to have the "Start" trim working.

I'm not sure I want to normalize the samples yet. I need to get the trim working and I'll look into that to see if it is helpful to make a useful instrument. Right now I want to duplicate the sound as produced by the App for a given velocity input in my cloned sample set.

OK, I see how you hooked up the Scarlett, but how is it hooked up to the iPad?

You have USB and MIDI cables. Is audio coming out of the iPad into the Scarlett, or out of the Scarlett into the iPad? Only seeing one side of this makes no sense to me.

The Scarlett is hooked up to the iPad in the normal way, just using the Apple CCK adapter into the Lightning Port. Basically in just the same way as any audio interface is ever connected to an iPad.

Just noticed that this thread mentions some issues which have been resolved. Nowadays SynthJacker does support either stereo or mono for external synths. You can select any individual input or a stereo pair made of adjacent inputs (like 1+2 or 3+4) on your audio interface.

OK, let me get this straight.

The USB is going out of the Scarlett to the Apple CCK adapter into the Lightning port.

The 3/4 audio out from the Scarlett is combined to mono via cable, then goes into Scarlett input 1.

I see MIDI in and out coming from the Scarlett. Where are these going?

The MIDI is looping back: out from the Scarlett and back into the Scarlett.

This is because SynthJacker doesn't support iOS Virtual MIDI, only hardware MIDI, so the MIDI has to go out to the interface and then back in to the iPad to feed the soft synth. With Virtual MIDI in SynthJacker this wouldn't be necessary.

Thank you! I do not have a Scarlett. I have an Mbox Pro for Pro Tools, so I will see if I can make that work.

Does this still require a hardware setup to sample internal iOS synths or has Synthjacker had an update to make this easier?

There are ways.

I've still not gotten around to having a go at this but if you have a DAW that can import SynthJacker MIDI and render audio to stems, @richardyot outlines a method here:

https://forum.audiob.us/discussion/comment/720800/#Comment_720800

AUv3 instruments are supported natively, but anything else (like Inter-App Audio) is handled with the "BYOAF" (Bring Your Own Audio File) feature, as detailed in the posting by @richardyot just linked above.

The DAW you use can also be an iOS/iPadOS one, like Cubasis. Just note that there may be some latency involved before the first note sounds, so maybe insert a dummy note at the start to "prime the pump", and check the results with an audio editor before slicing!
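To make the "dummy note" idea concrete, here is a minimal Python sketch of where the slice boundaries would fall in a rendered file when the first note is only there to prime the pump. This is an illustration under assumptions, not how SynthJacker slices BYOAF files. The function name and all parameters (`note_seconds`, `gap_seconds`, `skip_notes`) are hypothetical, and it assumes every note occupies an equal-length slot.

```python
def slice_points(note_count, note_seconds, gap_seconds,
                 sample_rate=44100, skip_notes=1):
    """Return (start, end) frame positions for each note to keep.

    Assumes the rendered file holds `skip_notes` dummy notes followed by
    `note_count` real notes, each sounding for `note_seconds` and
    followed by `gap_seconds` of silence.
    """
    slot = note_seconds + gap_seconds  # time from one note-on to the next
    points = []
    for i in range(skip_notes, skip_notes + note_count):
        start = round(i * slot * sample_rate)
        end = round((i * slot + note_seconds) * sample_rate)
        points.append((start, end))
    return points


if __name__ == "__main__":
    # Two real notes after one dummy note, 2 s each with a 1 s gap.
    for start, end in slice_points(2, 2.0, 1.0):
        print(f"slice frames {start}..{end}")
```

If the DAW adds latency, every real note shifts by the same amount, so it is still worth opening the render in an audio editor and checking that the first real note starts where the calculation says it should.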

@colonel_mustard @coniferprod

Good info. Thanks guys!

No worries! If you have any suggestions or problems let me know (send feedback from the bottom of the Settings screen).

Hi. Is it possible to get Synthjacker working with BT headphones? I use BOSE noise cancelling headphones often. But when using these the audio from Synthjacker comes out of the iPad speakers. Cheers.